
Speech to Text

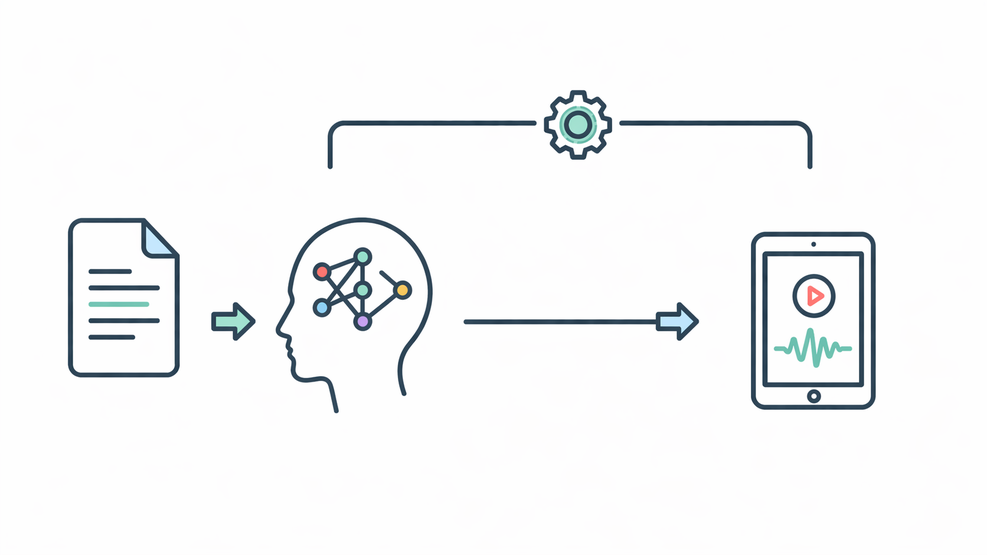

This module converts uploaded speech recordings into structured text, making spoken information searchable, reusable, and manageable as the platform scales.

Written date: 05/02/2026 15:48:24

Digital Suite

Introduction

This Speech-to-Text (STT) module runs as a microservice within the HUST Media ecosystem. It converts unstructured audio into structured text. As part of the data pipeline, it automates transcription so that recordings can be indexed in production environments.

Practical Notes

The implementation runs a wav2vec2 speech recognition model on a dedicated Flask AI server. It uses a standardized server-side directory layout (e.g., /opt/hustmedia/python) and environment-based configuration. This setup can serve as a blueprint for developers integrating AI-driven transcription into scalable architectures.

Who should use this module?

- Developers integrating automated audio transcription into high-traffic data pipelines.

- Architects seeking a production-ready STT microservice for scalable content management.

- Engineering teams optimizing unstructured audio processing with stable, low-latency AI models.

How My Vietnamese Speech-to-Text Pipeline Runs on a Flask AI Server


This article explains how my Speech-to-Text module processes Vietnamese audio on a Flask AI server, from file input and waveform normalization to transcription output. It also outlines the current model choice, API behavior, and practical runtime limits.


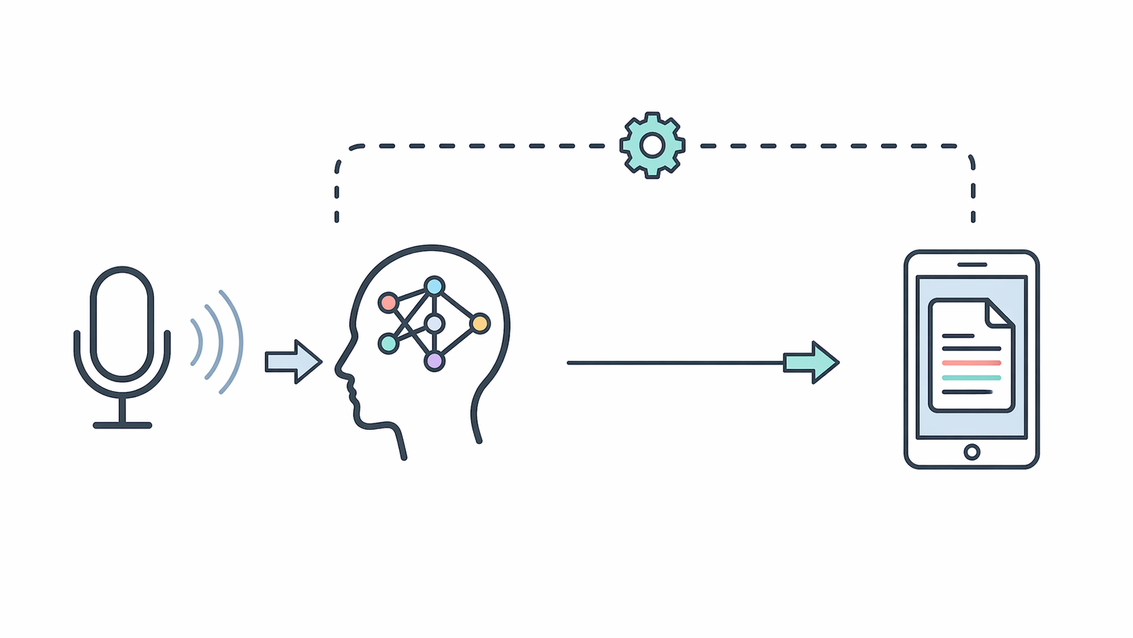

My Speech-to-Text module runs on the same Python Flask AI server used for the other media utilities in this workflow. The main API entry point is GET/POST /wav2vec2, served through Flask on port 8789. In the current version, the endpoint reads an audio path from form data or query parameters, and if no path is provided, it falls back to a default local file. The response returns JSON with status, transcript, and the processed file path.
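The path fallback and response shape described above can be sketched as two plain functions, independent of Flask. This is a minimal sketch under stated assumptions, not the module's actual handler: the parameter name `path`, the response key `file`, the status value `"ok"`, the default file location, and the choice to check form data before query parameters are all illustrative, since the article does not specify them.

```python
from typing import Mapping

# Hypothetical default; the article only says "a default local file".
DEFAULT_AUDIO = "/tmp/sample.wav"


def resolve_audio_path(form: Mapping[str, str], args: Mapping[str, str],
                       default: str = DEFAULT_AUDIO) -> str:
    """Return the audio path from form data, then query parameters, else the default."""
    # "path" is an assumed parameter name; form-before-query order is also assumed.
    for source in (form, args):
        value = (source.get("path") or "").strip()
        if value:
            return value
    return default


def build_response(transcript: str, audio_path: str) -> dict:
    """Shape the JSON payload: status, transcript, and processed file path."""
    # "ok" and the key "file" are assumed names for illustration.
    return {"status": "ok", "transcript": transcript, "file": audio_path}
```

In a Flask view, `request.form` and `request.args` could be passed in directly, and the returned dict sent back through `jsonify`.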

The transcription engine is based on the Hugging Face model khanhld/wav2vec2-base-vietnamese-160h. Both the processor and the model are loaded once when the module starts, rather than on every request. The runtime device is selected automatically: GPU when CUDA is available, CPU otherwise. Loading once avoids the reload cost on each request, so repeated requests respond more consistently, although the model stays resident in memory for as long as the service runs.
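A load-once setup with automatic device selection might look like the sketch below. It is one possible arrangement, not the module's own code: it uses `functools.lru_cache` so that loading happens on the first call rather than at import time, which is a lazy variant of the "load at startup" behavior described above. The function names `pick_device` and `get_engine` are hypothetical.

```python
from functools import lru_cache

MODEL_ID = "khanhld/wav2vec2-base-vietnamese-160h"


def pick_device() -> str:
    """Return "cuda" when a CUDA GPU is usable, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        # Fallback added so this sketch still runs without PyTorch installed.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


@lru_cache(maxsize=1)
def get_engine():
    """Load processor and model once; later calls reuse the cached objects."""
    from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

    device = pick_device()
    processor = Wav2Vec2Processor.from_pretrained(MODEL_ID)
    model = Wav2Vec2ForCTC.from_pretrained(MODEL_ID).to(device)
    model.eval()  # inference only, disables dropout
    return processor, model, device
```

Calling `get_engine()` once during server startup would reproduce the eager behavior; every request handler can then call it again at no extra cost.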

For audio processing, the file is loaded with librosa, converted to mono, and resampled to 16 kHz, the sampling rate the model expects. A normalized intermediate WAV file can also be written to /opt/hustmedia/python/tts/wav2vec2/run.wav for inspection or reuse. Before inference, the waveform is converted to float32, checked for empty input, and normalized by peak amplitude.
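The preparation steps above can be sketched as follows. The helper names are hypothetical, and the handling of silent (all-zero) audio is an assumption added to avoid division by zero; the article does not say how the module treats that case.

```python
import numpy as np

TARGET_SR = 16_000  # 16 kHz, as stated in the article


def load_mono_16k(path: str) -> np.ndarray:
    """Load an audio file as mono at 16 kHz using librosa."""
    import librosa  # imported lazily so prepare_waveform works without it

    wave, _ = librosa.load(path, sr=TARGET_SR, mono=True)
    return wave


def prepare_waveform(wave: np.ndarray) -> np.ndarray:
    """Cast to float32, reject empty input, and scale so the peak is 1.0."""
    wave = np.asarray(wave, dtype=np.float32)
    if wave.size == 0:
        raise ValueError("empty audio input")
    peak = float(np.max(np.abs(wave)))
    # Assumption: silent audio is returned unchanged instead of being divided by zero.
    return wave / peak if peak > 0 else wave
```

Peak normalization only rescales the signal; it does not change its shape, so quiet recordings reach the model at a comparable level to loud ones.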

Once prepared, the audio is converted to model inputs by the processor with sampling_rate=16000 and passed through the model under torch.no_grad(). The output logits are decoded with greedy CTC argmax, then converted into text with batch_decode. In its current form, this module does not use beam search, VAD chunking, or language-model rescoring, so long files are processed in a single pass, which can increase latency and memory usage.
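To make the decode step concrete, the sketch below shows greedy CTC decoding in two forms. `greedy_ctc_decode` is a toy NumPy illustration with a made-up vocabulary: take the argmax per frame, merge consecutive repeats, and drop the blank token. `transcribe` shows how the same step could be written with the Hugging Face objects named in the article; both function names are hypothetical, and this is not the module's own code.

```python
from typing import Sequence

import numpy as np


def greedy_ctc_decode(logits: np.ndarray, vocab: Sequence[str], blank_id: int = 0) -> str:
    """Greedy CTC: argmax per frame, merge repeated ids, drop blanks."""
    frame_ids = logits.argmax(axis=-1)
    chars = []
    previous = None
    for token_id in frame_ids.tolist():
        # Emit a symbol only when it differs from the previous frame and is not blank.
        if token_id != previous and token_id != blank_id:
            chars.append(vocab[token_id])
        previous = token_id
    return "".join(chars)


def transcribe(wave, processor, model, device: str) -> str:
    """Run the model without gradients and decode with argmax + batch_decode."""
    import torch  # imported lazily so the toy decoder above runs without it

    inputs = processor(wave, sampling_rate=16000, return_tensors="pt")
    with torch.no_grad():
        logits = model(inputs.input_values.to(device)).logits
    predicted_ids = torch.argmax(logits, dim=-1)
    return processor.batch_decode(predicted_ids)[0]
```

For example, frame ids `[1, 1, 0, 1, 2, 2]` with blank id 0 collapse to `[1, 1, 2]`: the blank between the two 1s is what keeps them as separate symbols. Because no language model or beam search is involved, each frame's best guess is final.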

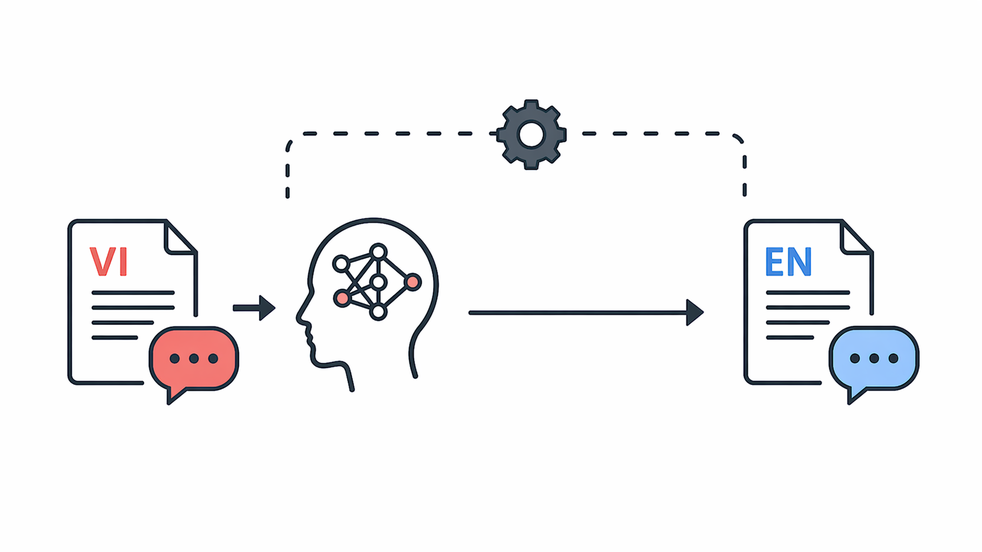

This module is mainly intended for practical Vietnamese transcription tasks such as voice notes, support logs, internal updates, and simple content preparation. It is not designed as a full enterprise ASR platform, but as a working in-house component that I built and maintain for my own workflow.

Technical configuration snapshot

- Server runtime: Flask on port 8789

- Main endpoint: GET/POST /wav2vec2

- STT model: khanhld/wav2vec2-base-vietnamese-160h

- Device selection: automatic GPU / CPU

- Input normalization: mono audio, 16 kHz

- Intermediate WAV path: /opt/hustmedia/python/tts/wav2vec2/run.wav

- Decode method: greedy CTC argmax

- Inference mode: torch.no_grad()

- Current limitation: no chunking, no VAD, no beam search
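The snapshot above could be captured in a small configuration object with environment overrides. This is a sketch only: the class name `SttConfig` and the environment variable names `STT_PORT`, `STT_MODEL_ID`, and `STT_WAV_PATH` are hypothetical and do not come from the module.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class SttConfig:
    """Defaults mirror the configuration snapshot in the article."""
    port: int = 8789
    endpoint: str = "/wav2vec2"
    model_id: str = "khanhld/wav2vec2-base-vietnamese-160h"
    sample_rate: int = 16_000
    intermediate_wav: str = "/opt/hustmedia/python/tts/wav2vec2/run.wav"

    @classmethod
    def from_env(cls, env=None) -> "SttConfig":
        """Override selected defaults from environment variables (hypothetical names)."""
        env = os.environ if env is None else env
        return cls(
            port=int(env.get("STT_PORT", cls.port)),
            model_id=env.get("STT_MODEL_ID", cls.model_id),
            intermediate_wav=env.get("STT_WAV_PATH", cls.intermediate_wav),
        )
```

Keeping the values in one frozen object makes it harder for the port, model name, or sample rate to drift apart between the server code and its documentation.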

Practical Use

Module Usage Guide

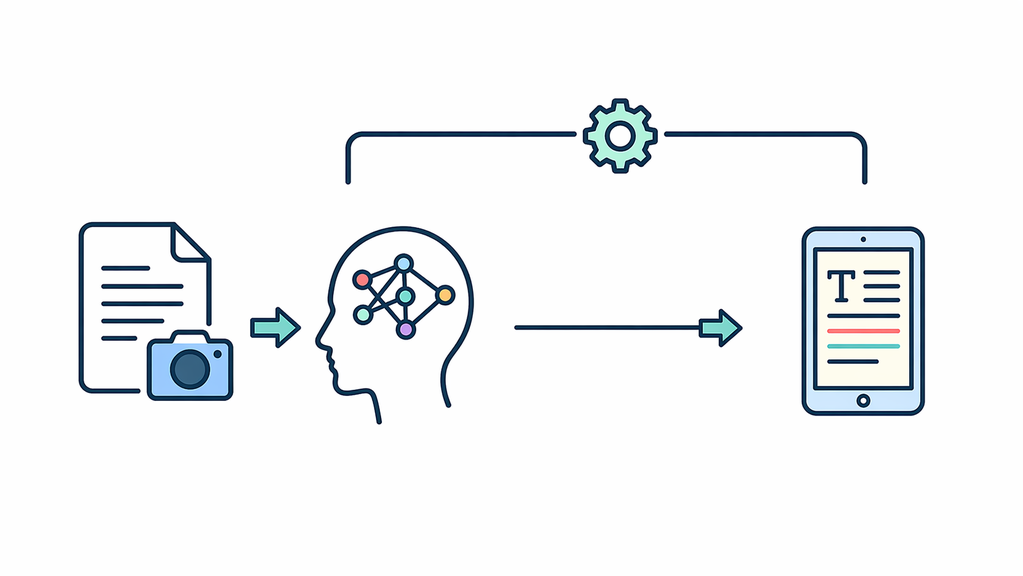

After the technical overview above, this guide explains how to use the Speech-to-Text module with short voice recordings and supported audio files.

- Upload an MP3, WAV, M4A, or OGG audio file within the duration limit.

- Click Generate Transcript to process the recording.

- Review the returned text for clarity, names, and important terms.

- Use shorter recordings for notes, support logs, or quick reporting tasks.

Use the section below to try the module directly with your own content. Start with a short recording, then adjust the file length to suit your workflow.

Audio Transcription

Upload audio to convert it into text

Supported formats: MP3, WAV, M4A, OGG

Duration limit: up to 5 minutes.
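A pre-upload check for the format and duration limits above might be sketched like this. The function name `validate_upload` and the wording of the messages are hypothetical, and the duration is assumed to have been measured already, by whatever means the caller prefers.

```python
from pathlib import Path

ALLOWED_EXTENSIONS = {".mp3", ".wav", ".m4a", ".ogg"}
MAX_DURATION_SECONDS = 5 * 60  # "up to 5 minutes"


def validate_upload(filename: str, duration_seconds: float) -> list:
    """Return a list of problems; an empty list means the upload is acceptable."""
    problems = []
    # Compare extensions case-insensitively, so "NOTE.MP3" is accepted.
    if Path(filename).suffix.lower() not in ALLOWED_EXTENSIONS:
        problems.append("unsupported format")
    if duration_seconds <= 0:
        problems.append("empty recording")
    elif duration_seconds > MAX_DURATION_SECONDS:
        problems.append("longer than 5 minutes")
    return problems
```

Checking by file extension is only a first filter; a file with a valid extension can still fail to decode, so the server should handle load errors as well.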


Sample Inputs

Input: Short voice note. Output: Draft text for reporting.

Input: Customer call recording. Output: Searchable transcript for support history.

Closing Notes

Reader Value

Readers can use this module pattern to turn spoken updates into structured text for reporting, documentation, and support tasks. In real projects, that reduces manual note-taking and keeps transcription handling consistent across integrated content flows.

Conclusion

This Speech-to-Text module combines controlled audio input handling, a reusable transcription path, and practical output processing into one maintainable service layer. It keeps the transcription workflow more consistent within the broader system architecture.
